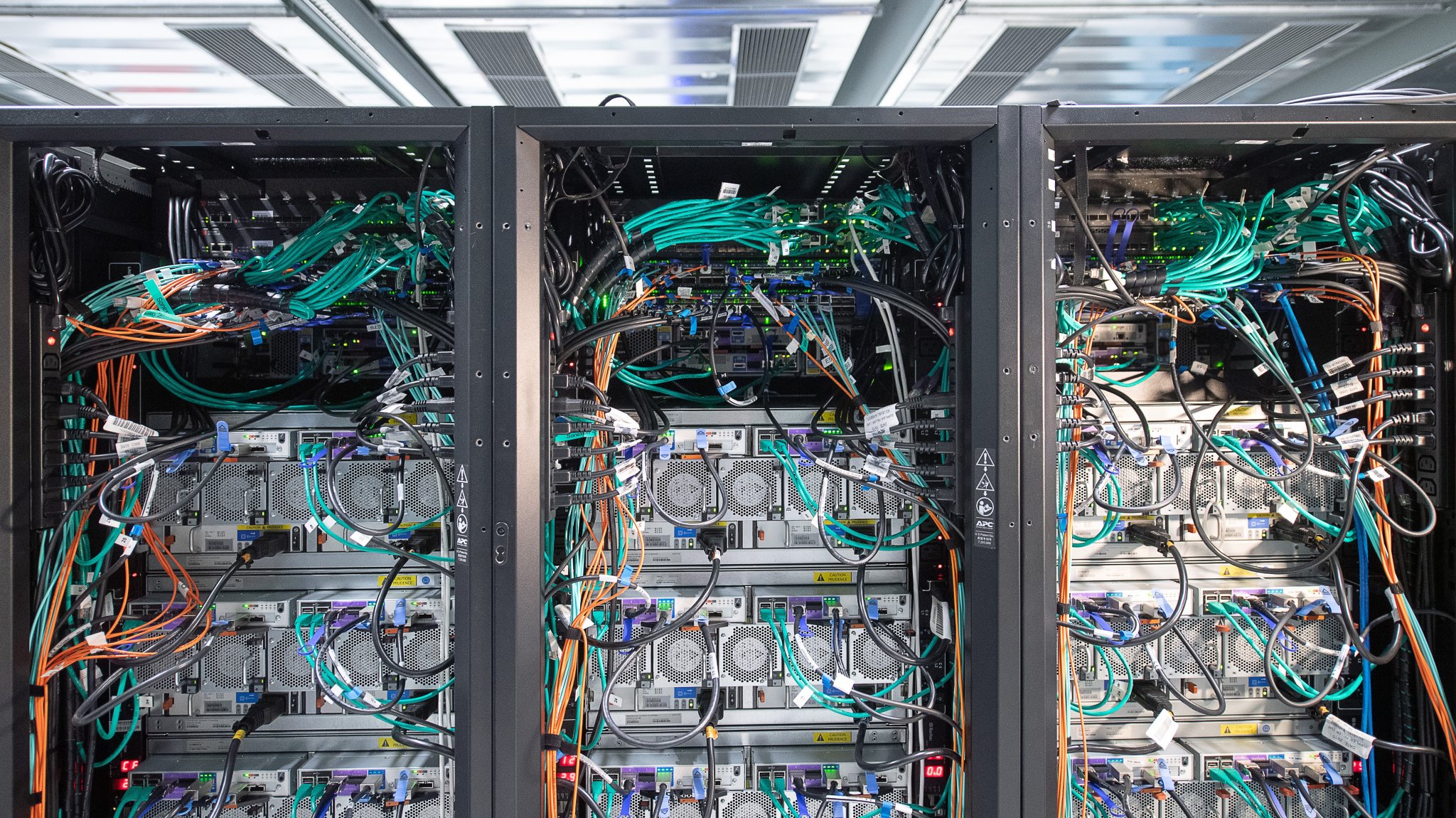

Picture Alliance/Getty Images

Researchers are starting to get to the bottom of one of the biggest mysteries behind the AI language models that power text and image generation tools like DALL-E and ChatGPT.

For some time now, machine learning experts and scientists have noticed something strange about large language models (LLMs) like OpenAI’s GPT-3 and Google’s LaMDA: they’re inexplicably good at performing tasks they weren’t specifically trained to do. It is a perplexing question, and just one example of why it can often be difficult, if not impossible, to explain in detail how an AI model arrives at its results.

In a forthcoming study to be published on the preprint server arXiv, researchers from the Massachusetts Institute of Technology, Stanford University and Google investigate this “apparently mysterious” phenomenon, dubbed “in-context learning.” To perform a new task, most machine learning models usually need to be retrained with new data, a process that typically requires researchers to input thousands of data points to get the desired output, which is a tedious and time-consuming undertaking.

But with in-context learning, the system can learn to perform new tasks reliably from just a few examples, essentially acquiring new skills on the fly. Once a language model is given a prompt, it can take a list of inputs and outputs and make new, often correct, predictions about a task it was not explicitly trained to do. This kind of behavior bodes well for machine learning research, and unraveling how and why it occurs could lend valuable insight into how language models learn and store information.
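As a rough illustration of what "a list of inputs and outputs" in a prompt can look like, here is a minimal Python sketch. The `Input:`/`Output:` layout, the function name `build_few_shot_prompt`, and the translation examples are all hypothetical choices made for this illustration; they are not taken from the study, and no language model is actually called.

```python
def build_few_shot_prompt(examples, query):
    """Format input/output pairs, then a new input, as one text prompt.

    The layout is an assumption for illustration; real prompts vary widely.
    """
    blocks = [f"Input: {x}\nOutput: {y}" for x, y in examples]
    # The final block leaves the output blank for the model to complete.
    blocks.append(f"Input: {query}\nOutput:")
    return "\n\n".join(blocks)


# Three demonstration pairs (English to French) and one new input.
examples = [("cat", "chat"), ("dog", "chien"), ("house", "maison")]
prompt = build_few_shot_prompt(examples, "bread")
print(prompt)
```

The point of the sketch is that the "training data" for the new task lives entirely in the text of the prompt; the model's own parameters are never touched.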

But what’s the difference in a model that learns and doesn’t just memorize?

“Learning is entangled with [existing] knowledge,” Ekin Akyürek, lead author of the study and a graduate student at MIT, told Motherboard. “We show that it is possible for these models to learn spontaneously from examples without us applying a parameter update to the model.”

That means the model isn’t just copying training data; it’s most likely building on prior knowledge, much as humans and animals would. The researchers didn’t test their theory with ChatGPT or any of the other popular machine learning tools that the public has been so enthusiastic about lately. Instead, Akyürek’s team worked with smaller models and simpler tasks. But because these are the same type of model, their work offers insights into the fundamentals of other, more prominent systems.

The researchers conducted their experiment by giving the model synthetic data, or prompts that the program could never have seen before. Even so, the language model was able to generalize and then extrapolate knowledge from them, said Akyürek. This led the team to hypothesize that AI models that exhibit in-context learning are actually building smaller models inside themselves to perform new tasks. The researchers were able to test their theory by analyzing a transformer, a neural network model that applies a concept called “self-attention” to track relationships in sequential data such as words in a sentence.
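For readers curious how a transformer relates each element of a sequence to every other, the following is a minimal pure-Python sketch of single-head scaled dot-product attention. It is a simplification under stated assumptions: queries, keys and values are taken to be the input vectors themselves, whereas real transformers use learned projection matrices, multiple heads and many layers. The function names and the toy vectors are hypothetical and do not come from the study.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(vectors):
    """Single-head attention with identity projections (an assumption)."""
    d = len(vectors[0])
    outputs, weights = [], []
    for q in vectors:
        # Score this position against every position, scaled by sqrt(d).
        scores = [dot(q, k) / math.sqrt(d) for k in vectors]
        w = softmax(scores)
        weights.append(w)
        # Each output is a weighted average of all input vectors.
        outputs.append(
            [sum(w_j * v[i] for w_j, v in zip(w, vectors)) for i in range(d)]
        )
    return outputs, weights


# Three toy "token" vectors standing in for words in a sentence.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` shows how strongly one position attends to the others, which is the sense in which the mechanism tracks relationships across a sequence.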

By observing it in action, the researchers found that their transformer could write its own machine learning model in its hidden states, or the space between the input and output layers. This suggests that it’s possible, both theoretically and empirically, for language models to “invent well-known and well-studied learning algorithms” seemingly all by themselves, Akyürek said.

In other words, these larger models work by creating and training smaller, simpler language models internally. The concept is easier to understand if you think of it as a matryoshka-like computer-within-a-computer scenario.
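To make the model-within-a-model idea more concrete, here is a hedged Python sketch of what fitting a small model from the examples in a prompt could look like. The choice of a one-variable linear model trained by gradient descent is an assumption made purely for illustration: the article does not say which learning algorithms were involved, and this code only mimics the idea from the outside rather than showing what happens inside a transformer. All names and numbers are hypothetical.

```python
def fit_linear_in_context(examples, steps=1000, lr=0.05):
    """Fit y = w*x + b to the given examples by gradient descent.

    Only the small inner model's w and b change; nothing outside
    this function is updated.
    """
    w, b = 0.0, 0.0
    n = len(examples)
    for _ in range(steps):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in examples) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in examples) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def predict_from_context(examples, query):
    """Train a fresh tiny model on the examples, then answer the query."""
    w, b = fit_linear_in_context(examples)
    return w * query + b


# Example pairs that follow y = 2x + 1, then a new input to predict.
examples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]
prediction = predict_from_context(examples, 4.0)
print(round(prediction, 3))
```

In this analogy, the outer function plays the role of the large model, and the temporary `w` and `b` play the role of the smaller model it builds for the task at hand.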

Regarding the team’s findings, Facebook AI Research scientist Mark Lewis said in a statement that the study “is a stepping stone to understanding how models can learn more complex tasks” and will help researchers develop better training methods for language models to further improve their performance.

While Akyürek agrees that language models like GPT-3 will open up new possibilities for science, he says they have already changed the way humans retrieve and process information. Whereas typing a prompt into Google used to simply retrieve information, and we humans were responsible for choosing (read: clicking) which information was best for that request, “GPT can now retrieve the information from the web, but also process [it] for you,” he told Motherboard. “So learning how to prompt these models on data cases that you want to solve is very important.”

Of course, leaving the processing of information to automated systems brings with it all sorts of new problems. AI ethics researchers have repeatedly shown how systems like ChatGPT reproduce sexist and racist biases that are difficult to mitigate and impossible to eliminate entirely. Many have argued that once AI models reach the size and complexity of something like GPT-3, it’s simply not possible to prevent this harm.

Although there’s still a lot of uncertainty about what future learning models can do, and even about what current models can do today, the study concludes that in-context learning could eventually be used to solve many of the problems that machine learning researchers will no doubt face down the road.

Source: www.vice.com
